AI-Generated Content: Ready for Primetime?

Written by Anthony Hyun

Director Technology – UI/UX

February 7, 2023

One of the hottest topics surrounding content is text generators and AI-generated content. Sounds almost too good to be true. You feed in your attributes, style, guardrails, and voilà, instant content! But hold on, is that content ready for primetime? Will AI-generated content support your content strategy and help you build your brand? Maybe.

An excellent approach to answering this question is to look back to the beginning, the conception of your content strategy. Remember when you pondered this question, “What content should we create and why?” The answer is straightforward. Any effective content strategy should support these goals:

- Attract a defined target audience

- Inform that audience about your business

- Engage and educate them

- Generate leads and sales

- Turn your audience into customers, fans, and advocates

What is the connective tissue that holds all these goals together? Trust. Trust is the builder of brands and the key ingredient for lasting customer loyalty. Trust is elusive and difficult to describe, but once you experience the power of trust, you can’t ignore it. Customers who trust brands will buy their products and recommend them to friends. Loyal customers will forgive brands when they make mistakes and allow them to become part of the fabric of their lives.

Brands build trust with customers by being truthful and reliable. Unfortunately, these are the two attributes that text generators have not yet mastered. Text generators achieve their magic by consuming massive amounts of data, and through pattern recognition, they are particularly proficient at predicting the next word in a sequence. In essence, it’s an auto-complete program for composing a poem about changing a spark plug in the style of Robert Frost.
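To make the "auto-complete" idea concrete, here is a minimal sketch in Python. It is a toy, not how ChatGPT or any production text generator is built: real systems use large neural networks, while this example simply counts which word most often follows another. The function names and the sample sentence are made up for illustration. What it does show is the core point: the program picks the statistically likely next word and has no notion of whether the result is true.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for every word, which words follow it in the training text."""
    followers = defaultdict(Counter)
    words = text.lower().split()
    for current, nxt in zip(words, words[1:]):
        followers[current][nxt] += 1
    return followers


def predict_next(followers, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = followers.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# Hypothetical training text, chosen only for this illustration.
corpus = "the spark plug fires and the spark plug wears and the engine runs"
model = train_bigrams(corpus)

print(predict_next(model, "spark"))  # plug
print(predict_next(model, "the"))    # spark
print(predict_next(model, "frost"))  # None (word never appeared in the data)
```

Feed the same program inaccurate text and it will predict inaccurate continuations just as confidently, because all it knows is what tends to come next.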

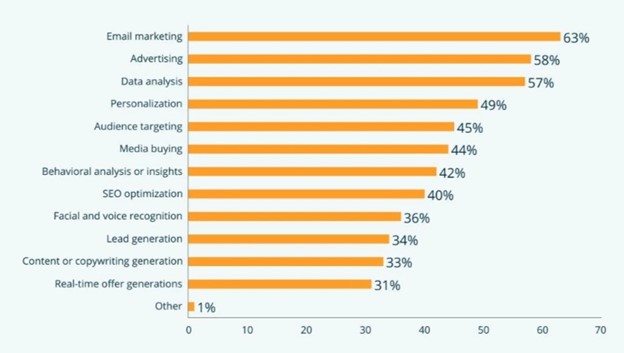

Areas in which Companies are Leveraging AI and ML Tools

The shortcoming of text generators is their lack of understanding. AI lacks awareness; it cannot comprehend what it’s creating. AI has no idea if it’s telling the truth. It simply provides an answer based on probability and the data it has consumed. This can lead to trust issues, especially if you publish content about a controversial topic prone to disinformation. The New York Times recently published an article supporting this point. Gordon Crovitz, a co-chief executive at NewsGuard, noted that “this tool (ChatGPT) is going to be the most powerful tool for spreading disinformation that has ever been on the internet.”

Another area of improvement for text generators is reliability. Remember, text generators don’t stop consuming data. Many believe that building more extensive neural networks will lead to more intelligent machines and fewer mistakes, but this may not always be true. Consider the above example, a controversial topic prone to disinformation. The events preceding and following the 2020 election produced a massive amount of content on both sides. Even a well-intentioned text generator with guardrails could give inconsistent answers when it draws on such conflicting material.

Sounds grim. Is there no place for AI-generated content? There is. Many areas can gain efficiencies with AI, but you need a human editor. You can’t just turn on a text generator and walk away. I was responsible for all the marketing and product content for four prominent sports apparel brands. A text generator could have saved so much time by writing rough copy for everything from responsive emails to product copy. The key word is “rough.” You will always need a copywriting professional to confirm accuracy and brand voice.

The other area where I see significant potential for AI-generated content is generating raw ideas. I’m sure many of you have participated in brainstorming sessions. A well-run brainstorm will produce as many “seed ideas” as possible initially, then groom the ideas later. AI could significantly contribute to these seed ideas by seeing different combinations and scenarios at lightning speed.

Text generators and AI-generated content are inspiring breakthroughs and will find their place. Gary Marcus, an emeritus professor of Psychology and Neural Science at NYU, thinks that when neural networks are combined with symbol manipulation, some of humanity’s outstanding questions may be answered. That said, until text generators can demonstrate true AGI tendencies (Artificial General Intelligence: being able to think like a human), they are not ready to create truthful, reliable, original, or insightful content without supervision. AI needs to be able to create intelligent rules that determine thoughtful answers and not rely purely on data. Otherwise, autonomous cars will continue to drive into unexpected parked jets because they can’t make the connection between large curvaceous objects and expensive things not to hit.
