Hadoop and NoSQL

What is Hadoop?

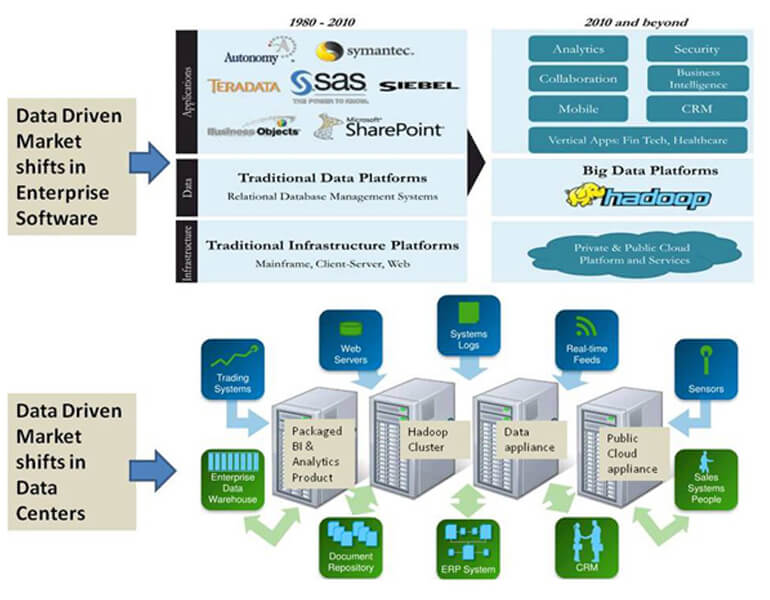

- Hadoop, an Apache Software Foundation project, is a new way for enterprises to store and analyze data.

- A scalable, fault-tolerant grid operating system for data storage and processing

- Its scalability comes from the marriage of:

  - HDFS: Self-Healing, High-Bandwidth Clustered Storage

  - MapReduce: Fault-Tolerant Distributed Processing

- Operates on unstructured and structured data

- A large and active ecosystem (many developers and additions like HBase, Hive, Pig, …)

- Open source under the friendly Apache License.
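To make the MapReduce half of that pairing concrete, here is a minimal single-process sketch of the classic word-count job. It only simulates the three phases (map, shuffle, reduce) in plain Python; the function names are hypothetical and this is not Hadoop's Java MapReduce API, which distributes the same phases across a cluster and reads its input from HDFS.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, List, Tuple


def map_phase(line: str) -> Iterator[Tuple[str, int]]:
    # Map: emit an intermediate (word, 1) pair for every word in one input record
    for word in line.lower().split():
        yield word, 1


def shuffle(pairs: Iterable[Tuple[str, int]]) -> Dict[str, List[int]]:
    # Shuffle: group intermediate values by key, as the framework does between phases
    groups: Dict[str, List[int]] = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(key: str, values: List[int]) -> Tuple[str, int]:
    # Reduce: combine all values that share one key
    return key, sum(values)


def word_count(lines: Iterable[str]) -> Dict[str, int]:
    # Chain the phases; on a real cluster each phase runs as many parallel tasks
    intermediate = (pair for line in lines for pair in map_phase(line))
    grouped = shuffle(intermediate)
    return dict(reduce_phase(key, values) for key, values in grouped.items())


if __name__ == "__main__":
    docs = ["Hadoop stores data", "Hadoop processes data"]
    print(word_count(docs))  # {'hadoop': 2, 'stores': 1, 'data': 2, 'processes': 1}
```

Because each map and reduce call depends only on its own input, a framework can simply re-run a task that fails on one machine, which is one source of the fault tolerance described above.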

NoSQL Component

Oracle announced its entry into the big data market at the end of 2011 and is taking an appliance-based approach. Its Big Data Appliance integrates Hadoop, R for analytics, a new Oracle NoSQL database, and connectors to Oracle’s database and Exadata data warehousing product line.

Oracle’s approach caters to the high-end enterprise market, leaning particularly toward the rapid-deployment, high-performance end of the spectrum. It is the only vendor to integrate the popular R analytical language with Hadoop, and to ship a NoSQL database of its own design rather than Hadoop’s HBase.

Rather than developing its own Hadoop distribution, Oracle has partnered with Cloudera for Hadoop support, which gives it a mature, established Hadoop solution. Database connectors again promote the integration of structured Oracle data with the unstructured data stored in Hadoop HDFS.

Oracle’s NoSQL Database is a scalable key-value database built on Berkeley DB technology. Oracle therefore owes Cloudera CEO Mike Olson a double debt of gratitude: beyond the Cloudera partnership, he was previously CEO of Sleepycat, the company that created Berkeley DB. Oracle is positioning its NoSQL database as a means of acquiring big data prior to analysis.
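As a rough illustration of what "scalable key-value database" means, the sketch below spreads keys across several shards by hashing each key, so that adding nodes spreads both data and requests. It is a generic toy under stated assumptions, not Oracle NoSQL Database's API or its actual partitioning scheme: the class `PartitionedKVStore` and its methods are hypothetical, and in-memory dicts stand in for the Berkeley DB storage on each node.

```python
import hashlib
from typing import Dict, List, Optional


class PartitionedKVStore:
    """Hypothetical toy key-value store that hash-partitions keys across shards."""

    def __init__(self, num_shards: int) -> None:
        if num_shards < 1:
            raise ValueError("num_shards must be positive")
        # Each dict stands in for one storage node (assumption: no replication here)
        self.shards: List[Dict[str, bytes]] = [{} for _ in range(num_shards)]

    def _shard_for(self, key: str) -> Dict[str, bytes]:
        # Use a stable hash (not Python's per-process randomized hash()) so a key
        # always maps to the same shard
        digest = hashlib.sha256(key.encode("utf-8")).digest()
        index = int.from_bytes(digest[:8], "big") % len(self.shards)
        return self.shards[index]

    def put(self, key: str, value: bytes) -> None:
        # Store or overwrite the value for a key on its owning shard
        self._shard_for(key)[key] = value

    def get(self, key: str) -> Optional[bytes]:
        # Look up a key only on the shard that owns it; None if absent
        return self._shard_for(key).get(key)

    def delete(self, key: str) -> bool:
        # Remove a key; report whether it was present
        return self._shard_for(key).pop(key, None) is not None


if __name__ == "__main__":
    store = PartitionedKVStore(num_shards=4)
    store.put("user/42/profile", b'{"name": "Ada"}')
    print(store.get("user/42/profile"))
    print(store.delete("user/42/profile"), store.get("user/42/profile"))
```

Each lookup touches exactly one shard, which is why simple put/get access patterns like this scale out more easily than queries that must join data across nodes.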

The Oracle R Enterprise product integrates directly with the Oracle database as well as Hadoop, letting R scripts run on data without having to round-trip it out of the data stores.